How Did Epidemiologists Get It So Wrong? Part 3 of 3

In my first post in this series, I outlined the three key mistakes that I believe epidemiologists have continued to make throughout the pandemic:

1. Over-estimation of the impact that policy has on the dynamics of the pandemic.

2. Lack of emphasis on non-policy-induced behavioural change as a mechanism for reducing transmission.

3. Exaggeration of confidence levels when making forecasts.

I covered the first and second of these in my previous posts, and I will cover the third today. This will (hopefully) be the last thing I write about Covid, as we are fast approaching an endemic equilibrium.

3) Exaggeration of confidence levels when making forecasts.

One of the golden rules that economists tend to abide by is to forgo making predictions. It is akin to going to a doctor and asking them to tell you when you will die: the doctor would not be able to make an accurate prediction but could make much broader comments about your mortality risk. For example, she could tell you that taking up smoking would shorten your life expectancy, or that exercising more would lengthen it. For this reason, I have sympathy for anyone, including epidemiologists, who is tasked with making forecasts about complex systems. That said, I do not have sympathy for epidemiologists who have been consistently wrong in their predictions throughout the pandemic and yet continue to exaggerate the confidence that should be placed in their forecasts. This is an example of Hayek's 'pretence of knowledge' problem, where forecasters mistakenly think that their models tell them more about the world than they actually do, and consequently place misguided levels of faith in them.

Neil Ferguson has been one of the most vocal epidemiologists in the UK throughout the pandemic, and he has rarely shied away from making predictions about it. His paper with colleagues at Imperial, which predicted 500,000 Covid deaths in the UK in the absence of non-pharmaceutical interventions (NPIs), was allegedly instrumental in persuading the UK government to impose a lockdown in March 2020. This prediction is actually not problematic, and you do not need any kind of fancy model to see that. If you assume the R0 of Covid is 2.7 and the infection fatality rate is 1%, then the herd immunity threshold is 1 - 1/2.7 ≈ 63%. So, in the UK, with a population of 66 million, this equates to roughly 42 million people getting Covid before herd immunity is reached. If 1% of them die, that is roughly 415,000 deaths in total. One problem I do have with this prediction harks back to the second mistake: people would have voluntarily changed their behaviour well short of the herd immunity threshold. To be fair, this is acknowledged to some degree in the paper. Unfortunately, Ferguson's other predictions throughout the pandemic have been much less forgivable. I don't mean to single him out, even though I think he is a particularly egregious offender, and I should be clear that many others have also performed risibly.
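The back-of-the-envelope calculation above takes only a few lines to reproduce. This is a minimal sketch assuming homogeneous mixing, the textbook threshold formula 1 - 1/R0, a 1% infection fatality rate and a UK population of 66 million; the function names are illustrative. It prints results both for R0 = 2.7 and for R0 rounded up to 3.

```python
def herd_immunity_threshold(r0: float) -> float:
    """Fraction that must be immune for R to fall below 1 (homogeneous-mixing formula)."""
    return 1.0 - 1.0 / r0


def deaths_at_threshold(r0: float, ifr: float, population: float) -> float:
    """Deaths if exactly the threshold fraction of the population is infected."""
    return herd_immunity_threshold(r0) * population * ifr


population_uk = 66_000_000  # assumption: figure used in the text
for r0 in (2.7, 3.0):
    hit = herd_immunity_threshold(r0)
    deaths = deaths_at_threshold(r0, ifr=0.01, population=population_uk)
    print(f"R0={r0}: threshold {hit:.0%}, about {deaths:,.0f} deaths")
```

With R0 = 3 this gives the familiar two-thirds threshold, 44 million infections and 440,000 deaths; with R0 = 2.7 exactly, the threshold is closer to 63%. Either way the answer lands in the same ballpark as the Imperial figure.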

It should be noted that Ferguson was a co-author of the aforementioned Flaxman paper, which found that lockdowns were essentially the only way of preventing a catastrophic outcome. As a result, I think he is biased towards this lockdown-centric view of the pandemic in his other work; there is a reason he has been nicknamed 'Professor Lockdown'. This showed in his remarks in the run-up to the UK's 'freedom day' on July 19th:

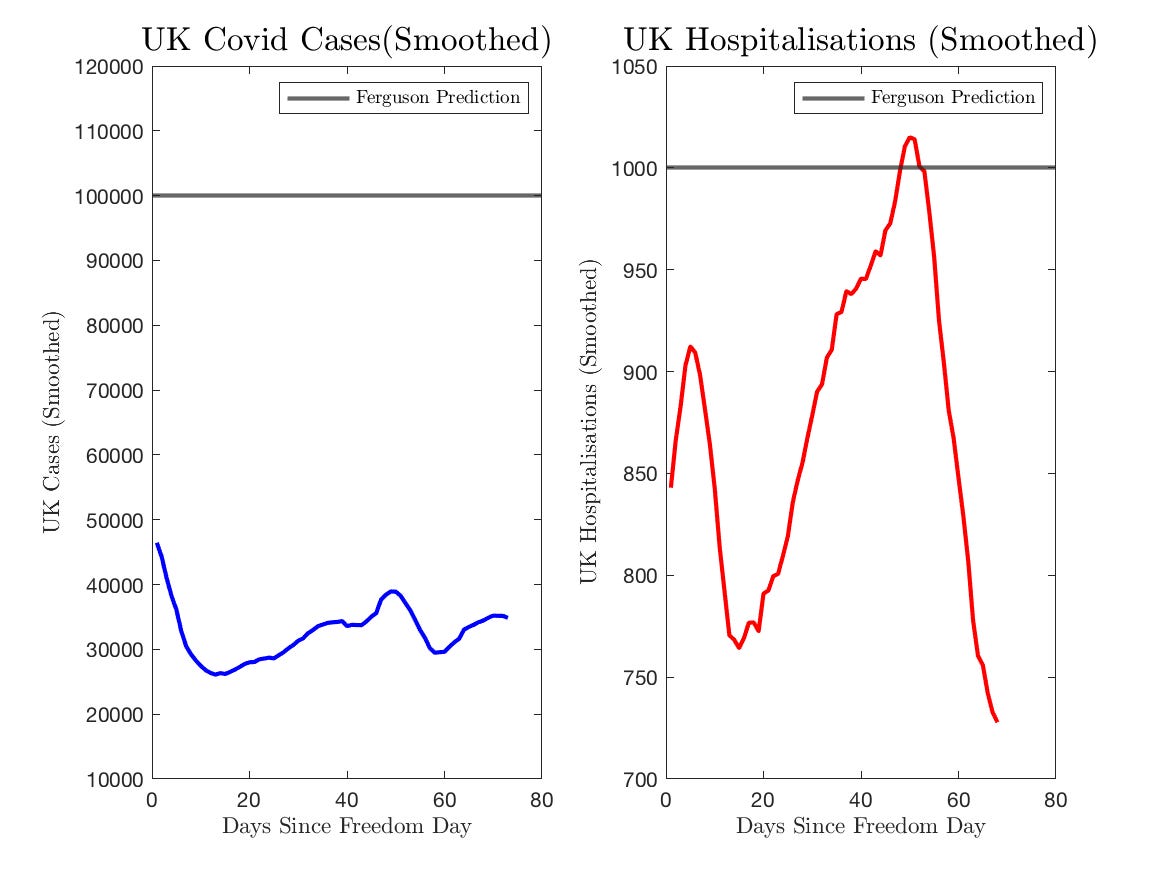

“It’ll almost certainly get to 100,000 cases a day. It's almost certain we will get to 1,000 hospitalisations a day. The real question is, do we get to double that or even higher? And that’s where the crystal ball starts to fail. We could get to 2,000 hospitalisations a day, 200,000 cases a day, but it’s much less certain.”

Note that he says "almost certainly". To me, this implies a confidence level of at least 95%. Let's see what actually happened to UK cases and hospitalisations after restrictions were lifted.

Cases came nowhere close to 100,000 a day, and hospitalisations only barely cleared his 1,000-a-day mark, and then only briefly. To be that confident in a prediction and still miss by this margin is laughable. When asked 10 days after freedom day whether he stood by this prediction, he replied that "we won’t see for several more weeks what the effect of the unlocking is." This is, once again, a ridiculous statement: the virus has a generation time of roughly 4 days, so 10 days already covers two to three generations of transmission, and we would certainly have expected to see a spike by the time he gave that answer if he had been even remotely correct. More than two months have now elapsed and we continue to see very flat case dynamics, so he was wrong on that count too.
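The generation-time argument is simple compounding: if each generation of infections is R times the size of the previous one and a generation lasts T_g days, then after t days daily infections have been multiplied by R^(t / T_g). This is a minimal sketch assuming a constant R and the roughly 4-day generation time mentioned above; the R values are hypothetical illustrations, not estimates for the UK in July 2021, and the sketch models infections only, ignoring the lag between infection and a reported case or hospital admission.

```python
def growth_factor(r: float, days: float, generation_time: float = 4.0) -> float:
    """Multiplicative change in daily infections after `days`, assuming a constant
    reproduction number `r` and a fixed generation time (in days)."""
    generations = days / generation_time
    return r ** generations


# Hypothetical reproduction numbers, chosen purely for illustration.
for r in (1.2, 1.5, 2.0):
    print(f"R={r}: infections x{growth_factor(r, days=10):.2f} after 10 days")
```

For example, a hypothetical R of 1.5 implies daily infections roughly 2.8 times higher after 10 days, which is the kind of rise that should already be visible in the data.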

The coronavirus pandemic is not the first occasion on which Ferguson has made incredibly inaccurate predictions about infectious diseases:

· In 2001, during the UK's foot-and-mouth outbreak, he was part of an analysis that resulted in the slaughter of 11 million cows, pigs and sheep on farms. It now seems this was completely unnecessary. He also predicted that 150,000 people could die. There were fewer than 200 deaths.

· In 2002, Ferguson predicted that up to 50,000 people would likely die from exposure to mad cow disease (BSE) in beef. In the UK, there were only 177 deaths.

· In 2005, he predicted that as many as 150 million people could be killed by bird flu. Only 282 people died worldwide from the disease between 2003 and 2009.

· In 2009, he predicted that the outbreak of swine flu could kill up to 65,000 people in the UK. Only 457 people died from the disease in reality.

Why does anyone listen to him? You would think that this track record might cause Ferguson to stop making predictions, but alas, no. In mid-August, he predicted that the UK faced the prospect of a "large wave of infection in September, October" that could push hospitalisations over 1,000 a day. He may well be correct this time, but worryingly he does not seem to have learned anything about the follies of forecasting from his previous errors.

These forecasting deficiencies have been widespread among epidemiologists. The image below compares the hospitalisations predicted by the Warwick model provided to the government with actual hospitalisations:

For long stretches, actual hospitalisations fell well outside the uncertainty bands. Here is the same comparison for the LSHTM model:

Exactly the same degree of ridiculous pessimism was present when these modellers were asked in February to forecast the summer dynamics. Bear in mind that this was before the emergence of the Delta variant, which makes it even worse:

According to them, it was within the realms of possibility that a summer wave could push hospital occupancy to more than twice the January peak. This is completely implausible and shows how broken these models are.
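One simple way to make "fell well outside the uncertainty bands" precise is empirical coverage: the share of observed values that land inside the forecast interval. Over time, a well-calibrated 90% interval should contain roughly 90% of outcomes. This is a minimal sketch assuming you have the model's lower and upper bands and the observed series aligned on the same dates; the numbers in the example are made up purely to show the mechanics and are not the Warwick or LSHTM figures.

```python
from typing import Sequence


def interval_coverage(observed: Sequence[float],
                      lower: Sequence[float],
                      upper: Sequence[float]) -> float:
    """Fraction of observations lying inside [lower, upper] on the same dates."""
    if not (len(observed) == len(lower) == len(upper)):
        raise ValueError("series must have equal length")
    if not observed:
        raise ValueError("need at least one observation")
    # Count the dates on which the outcome fell within the forecast band.
    inside = sum(lo <= y <= hi for y, lo, hi in zip(observed, lower, upper))
    return inside / len(observed)


# Made-up daily hospital admissions and a hypothetical 90% forecast band.
observed = [850, 870, 880, 860, 820, 790]
lower = [800, 900, 1000, 1100, 1200, 1300]
upper = [1500, 1800, 2100, 2400, 2700, 3000]
print(f"coverage: {interval_coverage(observed, lower, upper):.0%} (nominal 90%)")
```

Coverage far below the nominal level, sustained over many dates, is the quantitative signature of intervals that are too confident.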

It is an unfortunate prospect, but there will most likely be more pandemics. Covid may merely have been the fire drill, as the emergence of a more virulent, more transmissible virus is possible. I started the first in this series of posts by saying that the criticism economics received was ultimately beneficial for the discipline. I think the same is true for epidemiology. It has taken a global pandemic to reveal the issues at the heart of the workhorse models. Bad assumptions beget bad models, and bad models beget bad forecasts and bad policymaking. Behavioural adjustment is at the heart of economics, and epidemiological models desperately need to better incorporate it if we are to be in a better position when the next pandemic does inevitably arrive.
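To illustrate what incorporating behavioural adjustment can mean mechanically, here is a minimal discrete-time SIR sketch in which the transmission rate falls as prevalence rises, beta(I) = beta0 / (1 + k * I). That functional form, the parameter name `k` (how strongly people cut contacts in response to current prevalence), the 5-day infectious period and the starting prevalence are all illustrative assumptions, not a model from any of the papers discussed in this series. Setting k = 0 recovers the textbook SIR model, and the one-day Euler steps are crude but adequate for illustration.

```python
def simulate_sir(r0: float, k: float, days: int = 365,
                 infectious_period: float = 5.0,
                 i0: float = 1e-4) -> tuple[list[float], float]:
    """Euler-step SIR model on population fractions with one-day steps.

    The transmission rate is damped by current prevalence, beta0 / (1 + k * I),
    as a simple stand-in for voluntary behaviour change; k = 0 is standard SIR.
    Returns (daily prevalence, fraction ever infected by the final day).
    """
    gamma = 1.0 / infectious_period  # recovery rate per day
    beta0 = r0 * gamma               # baseline transmission rate
    s, i = 1.0 - i0, i0              # recovered fraction is 1 - s - i
    prevalence = []
    for _ in range(days):
        beta = beta0 / (1.0 + k * i)     # contacts fall as prevalence rises
        new_infections = beta * s * i
        recoveries = gamma * i
        s -= new_infections
        i += new_infections - recoveries
        prevalence.append(i)
    return prevalence, 1.0 - s


for k in (0.0, 50.0):
    prevalence, attack_rate = simulate_sir(r0=2.7, k=k)
    print(f"k={k}: peak prevalence {max(prevalence):.1%}, "
          f"infected by day 365 {attack_rate:.0%}")
```

Comparing the two runs shows the behavioural damping flattening the peak. How much it changes the cumulative total depends heavily on the assumed functional form and on k, which is exactly the kind of parameter such models would need to estimate from data.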